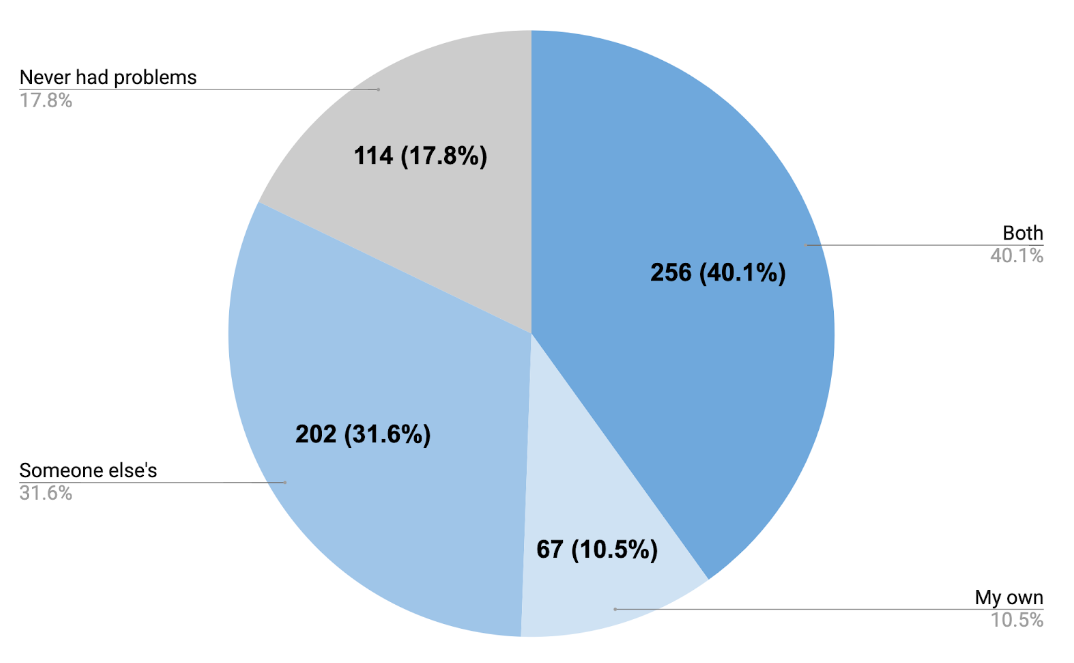

Reproducibility of scientific findings leads to faster progress and innovation. To understand what motivates scientists to attend reproducibility training events, instructors running workshops through Reproducibility for Everyone conducted a voluntary survey of participants between 2020 and 2022 (n=639). The findings provide insights into the experiences of researchers and highlight how educational motivations are often rooted in personal struggles. Our survey found that 82% of participants have struggled to reproduce research results:

- 72% have struggled to reproduce someone else’s research results,

- 50% have struggled to reproduce their own research results, and

- 40% have struggled to reproduce both their own research results and someone else’s.

So what motivates scientists to attend reproducibility training?

1. Avoiding a rabbit hole of frustration.

Frustration is a powerful motivator driving scientists to seek reproducibility training. When asked to anonymously share their experiences with reproducibility, one participant described grappling with conflicting results:

“My first project in grad school, I was asked to continue the work a grad student and staff scientist had gotten. My advisor provided me with 2 slides of their previous data. At n=4, my results were opposite of what they had found. My advisor wouldn’t accept my answer even though the previous results were of n=1. I spent 1 year going down the rabbit hole and switched projects in the end. I was never able to reproduce their work.”

2. Investing time in training to avoid future time lost.

Time is a precious resource in scientific research, and spending time attempting to reproduce data can be disheartening. As one participant put it, they “lost months of work trying to reproduce data.” Learning about reproducibility also takes time, but this participant was motivated by the promise of saving time in the long run.

3. Avoiding methods that do not work.

A lack of reliable methods can hinder scientific progress. One participant recounted the difficulties their lab faced in reproducing a novel technique:

“A graduate student in my lab started a project that developed a new technique. He eventually received a Science paper using this technique to collect data. A second graduate student came in and was unable to get the same results with his technique. Eventually he dropped the project. I then joined and tried my hand at a different project that also used the same technique. I also failed to replicate similar results with the technique. A fourth individual, this time a postdoc, joined the lab and also struggled with the technique. Ultimately, no one has ever repeated the results of the original student that published a Science paper on the results.”

This story underscores the need for researchers to collaborate, refine, and share reproducible methods.

4. Not restarting from scratch.

Improving documentation can help avoid confusion when your most likely collaborator, your future self, tries to reproduce your results. One participant shared their struggle in writing a methods section:

“It was very hard to write up a methods section once because I was not good at the time at keeping records of what I’d done – I had the results files from bioinformatics analysis but no clue how I’d gotten them (software used, let alone what settings, what version). Took some extensive sleuthing to reconstruct.”

5. Leaving methods in a better state than you found them.

Missing information can impede the comparison and adoption of new methods. As one participant explained:

“Currently working on comparing our computational method to other existing methods, and it’s taken probably a year to get them all working, including rewriting parts of their computational pipelines.”

By learning in training how to document and share methods reproducibly, this researcher can help future researchers quickly implement the best tools for their research.

The experiences of scientists as they work through real reproducibility challenges motivate them to elevate the quality and reliability of their research. By investing in reproducibility training and committing ourselves to continuous learning, we can ensure that scientific studies truly advance our collective knowledge. This is an opportunity not just for individual researchers but for the scientific community as a whole to come together, share experiences, and push the boundaries of what we can achieve.

April Clyburne-Sherin

April Clyburne-Sherin is co-founder and Executive Director of Reproducibility for Everyone (R4E), a community-led reproducibility education project for researchers. She is an epidemiologist and expert in open research tools, methods, training, and community stewardship. Since 2014, she has focused on creating curriculum and running workshops for scientists in open and reproducible research methods. She has developed and run short-duration reproducibility training for many groups, including the Center for Open Science, Sense About Science, and Code Ocean, and has consulted for SPARC, Code for Science & Society, Open Scholarship Knowledge Base, and CUNY.

Website: https://www.repro4everyone.org/
